Inclusive Design Arena

As a grad student in HCI, I was fascinated by UX ethics, which led me to seize the opportunity to be a TA for the newest class in our school, ‘UX Ethics’, taught by Dr. Lynn Dombrowski, who leads the social justice design lab at IUPUI. I learned a great deal about what not to do, both as an ethical human being and as a designer. Teaching, after all, is mostly learning. I am also glad to have taken Psychology for HCI with Dr. Karl MacDorman, which exposed me to human factors and to how people perceive human-like and machine-like interactions. Here, I want to share my thoughts on ‘Responsible AI’ from an NLP meetup I attended.

Time to stitch the learnings together

Let me start with a question I have pondered a million times. I often wondered why the business mindset has led people to assume that organizations use subtle persuasion tactics to sell their products. Every time someone threw this question at me, I chose to look at it from a different perspective: being an advocate for people is also good for the business. The real question is which tradeoffs to make while keeping both users and the organization at the center, so that people can navigate their lives more easily. That question led me to take part in the AI design conversation and to shape designs through these conversations, making human interactions with technology more conversational.

Why should we care about ethics in UX?

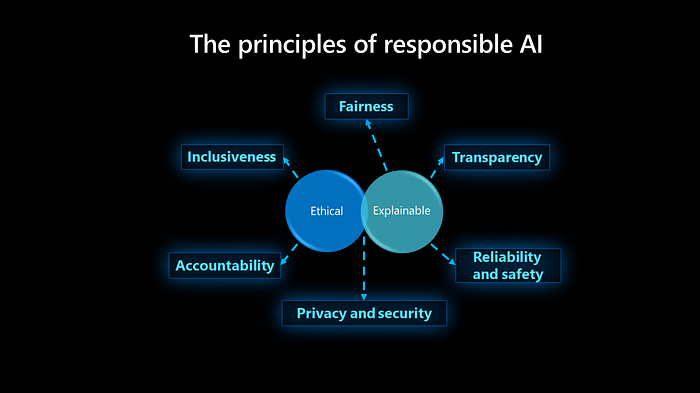

How many of us hold ourselves accountable for the designs we create, knowing they may shape people's lives for decades? As designers, we have a sense of togetherness ingrained in everything we do. We want to teach technology to do good and to learn well, so that it is sustainable and inclusive of the diversity of the human race. Let’s be the enablers of that kind of purposeful technology. Below, I share a framework presented at the design meetup by leaders in the field to help design teams build ethical AI.

Highlighting 3 conversations every AI product team needs to have

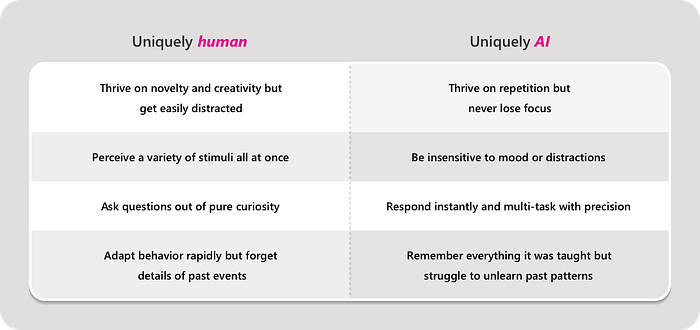

Conversation 1: Capability

Clearly articulating what is uniquely human and what is uniquely AI about the product’s capability.

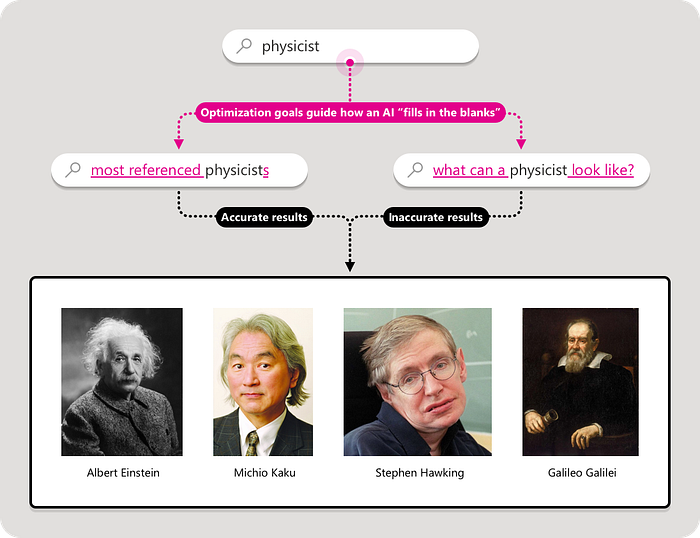

Conversation 2: Accuracy

Agreeing on how to measure the accuracy and inaccuracy of AI in the product experience with the help of two lists:

Which operating conditions *should* play a role in the AI’s *accuracy*?

Which operating conditions *shouldn’t* play a role in the AI’s *inaccuracy*?

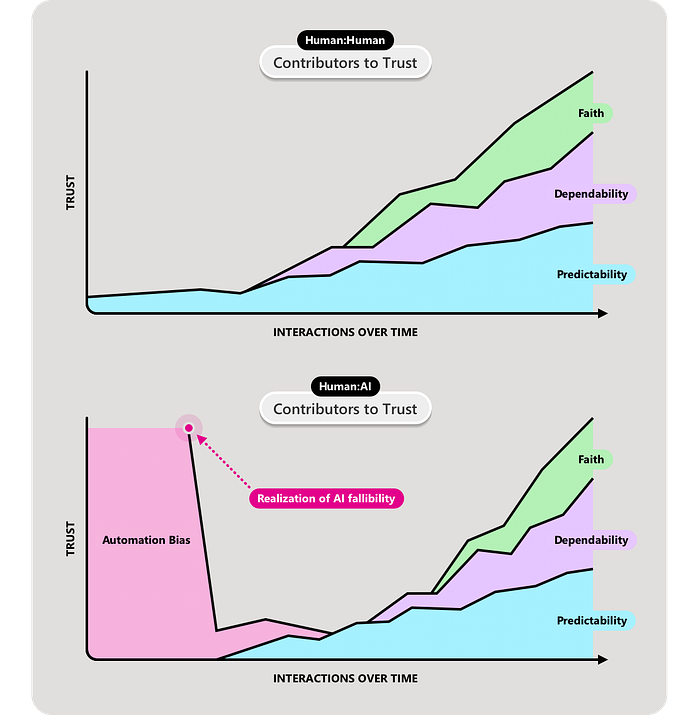

Conversation 3: Learnability

Approaching collaboration between people and AI as a process of shared intuition and learning. I am thankful to Dr. Saptarshi Purkayastha for giving me the opportunity to work on human-AI collaboration in radiology. Writing this blog helps me revisit certain touchpoints for the future of human-AI collaboration. Working with probabilistic systems requires applying insights from sociology and psychology.

When two people meet for the first time, they don’t implicitly trust each other.

Dr. Arathi Sethumadhavan explains that trust between people is built in two stages:

Building a mental model of an AI’s idiosyncrasies helps people predict what it is likely and unlikely to do. It also helps them judge whether the interaction risks falling into either of two failure modes:

Misuse: People using the AI in unreliable ways because they didn’t understand or appreciate its intended uses/limitations; or

Disuse: People not using the AI because its performance didn’t match their expectations about its intended uses/limitations.

Read more: https://uxdesign.cc/when-are-we-going-to-start-designing-ai-with-purpose-e196f986974b

Thank you, Josh Lovejoy, for the meetup presentation and for sharing the blog. It is one of the most thoughtful pieces on my reading list.